Consumer Products Company Turnaround

Client: Fasco — ventilation products manufacturer, ~$85M annual sales

Engagement type: Manufacturing rehabilitation

The situation

Fasco, a ventilation products manufacturer with roughly $85 million in annual sales, was underperforming so badly that closure was on the table unless results could be turned around.

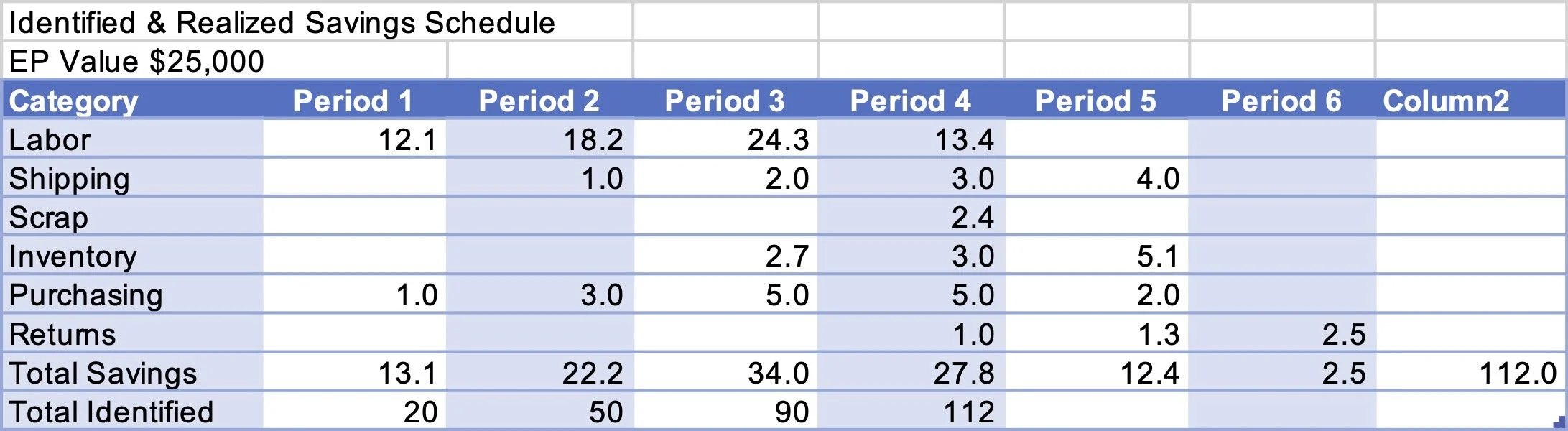

The product line was unusually long-tailed: 1,400 SKUs, of which 50 generated half of sales and 245 generated 80%. Inventory had ballooned to nearly $30 million against $85 million in sales — 120 days on hand, a turn rate of 2.8 against an industry-normal range of 12 to 15. The owner's target was a return-on-assets improvement from 4% to 10%, implying $2.7 million in annual cost savings at the existing sales rate.
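
The headline figures can be cross-checked with simple arithmetic. This is a back-of-envelope sketch under two assumptions of ours, not the client's accounting definitions: inventory turns are annual sales divided by inventory, and cost savings flow straight into the ROA numerator while the asset base is held fixed.

```python
# Back-of-envelope check of the Fasco figures, in $ millions.
# Assumptions (ours, not the client's accounting): turns = annual sales / inventory,
# and savings raise profit one-for-one while the asset base is held fixed.

ANNUAL_SALES = 85.0
INVENTORY = 30.0
SAVINGS_TARGET = 2.7
ROA_BEFORE, ROA_AFTER = 0.04, 0.10


def inventory_turns(sales: float, inventory: float) -> float:
    """How many times per year the inventory value turns over."""
    return sales / inventory


def inventory_for_turns(sales: float, turns: float) -> float:
    """Inventory level that would produce the given turn rate at this sales volume."""
    return sales / turns


def implied_asset_base(savings: float, roa_before: float, roa_after: float) -> float:
    """Assets over which an added profit of `savings` lifts ROA from before to after."""
    return savings / (roa_after - roa_before)


print(f"current turns: {inventory_turns(ANNUAL_SALES, INVENTORY):.1f}")
for turns in (12, 15):
    print(f"inventory at {turns} turns: ${inventory_for_turns(ANNUAL_SALES, turns):.1f}M")
print(f"implied asset base: ${implied_asset_base(SAVINGS_TARGET, ROA_BEFORE, ROA_AFTER):.0f}M")
```

On these assumptions, the ROA target implies an asset base of about $45 million. Reaching 12 to 15 turns at the same sales volume would mean holding roughly $5.7 to $7.1 million of inventory instead of $30 million.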

What we found

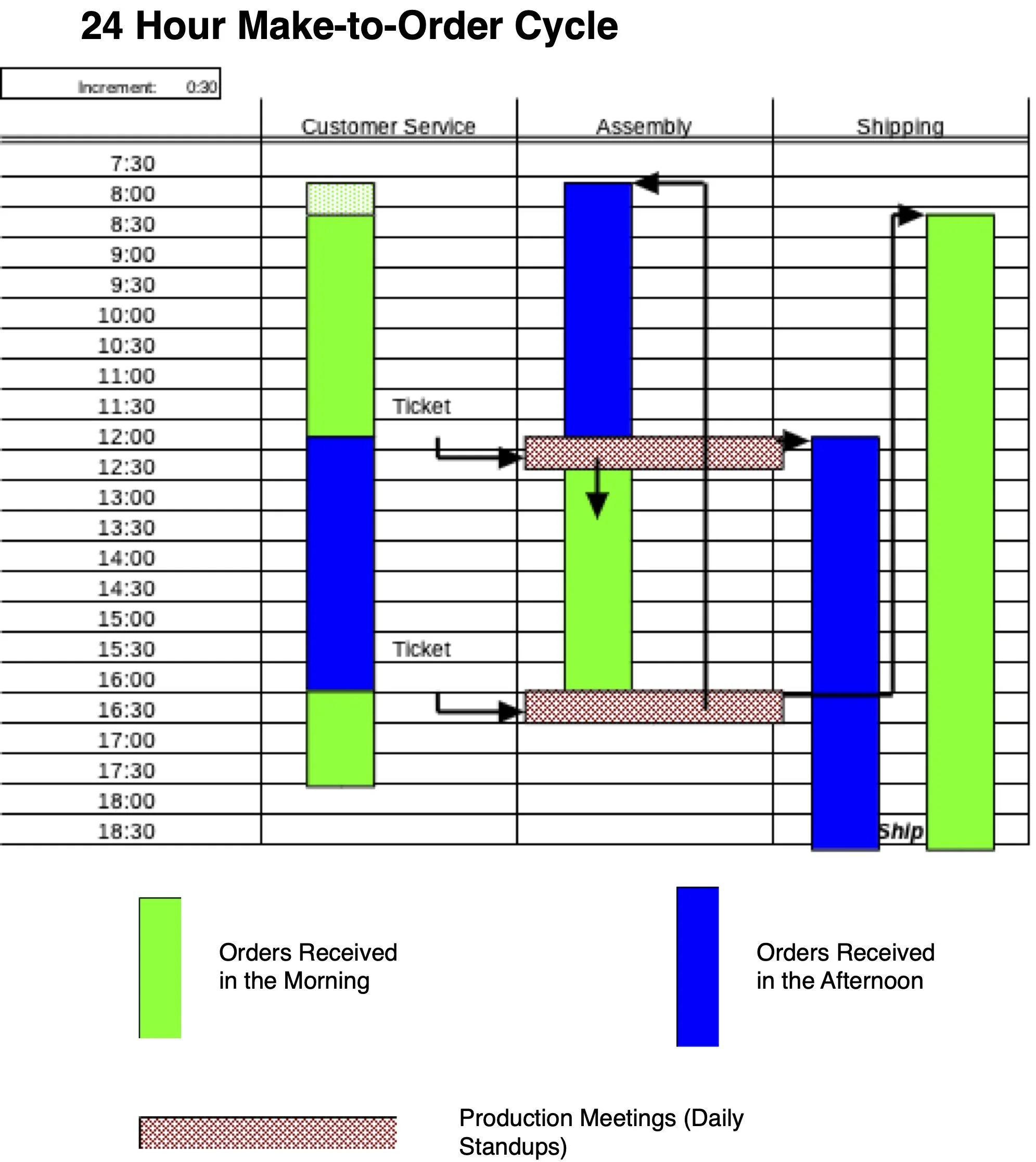

The root cause was structural, not operational. Manufacturing and assembly were scheduled make-to-inventory against sales forecasts, with assembly work organized along traditional conveyor-belt lines. That scheduling model was producing the inventory overhang directly, and the conveyor layout made any shift to a more responsive model difficult. Defect rates were also running well above target, adding material and return costs on top of the inventory problem.

We concluded the savings target was reachable, but only by moving to make-to-order — which meant rebuilding both the scheduling system and the physical assembly layout.

What we did

We restructured the operation around make-to-order production, replacing the conveyor-belt assembly lines with one-piece flow cells designed for single-cycle changeover. Lot sizes dropped from production-batch quantities to 10 units. Resupply moved to a Kanban pull system. The new layout was engineered so that assembly cycle time matched product takt time, which allowed finished-goods inventory (FGI) to deplete naturally as the new system ran. The result was a 24-hour order-to-ship process that carries no FGI.
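
The mechanics of this redesign rest on standard lean formulas:

- Takt time is available production time divided by customer demand.
- A balanced cell needs enough people that its cycle time fits within one takt.
- A common Kanban sizing rule is demand × lead time × (1 + safety factor) ÷ container size.

The sketch below applies these formulas with hypothetical inputs (shift length, labor content per unit, resupply lead time, safety factor) and assumes work is perfectly balanced across the cell. It also shows one way variable crewing can work: when demand falls, takt time lengthens, so fewer workers can keep pace.

```python
import math

# Standard lean formulas. All inputs below are hypothetical, not Fasco's data.


def takt_time(available_seconds: float, demand_units: int) -> float:
    """Seconds of available production time per unit of customer demand."""
    return available_seconds / demand_units


def crew_size(work_content_seconds: float, takt_seconds: float) -> int:
    """Fewest workers who can share a unit's work content within one takt (perfect balance)."""
    return math.ceil(work_content_seconds / takt_seconds)


def kanban_cards(demand_per_hour: float, lead_time_hours: float,
                 safety_factor: float, container_size: int) -> int:
    """Cards covering demand over the replenishment lead time, plus a safety margin."""
    return math.ceil(demand_per_hour * lead_time_hours * (1 + safety_factor) / container_size)


SHIFT_SECONDS = 7.5 * 3600   # one hypothetical 7.5-hour shift
WORK_CONTENT = 600           # hypothetical seconds of labor per unit

# As daily demand rises, takt time shrinks and the cell needs more people.
for daily_demand in (45, 180, 360):
    takt = takt_time(SHIFT_SECONDS, daily_demand)
    print(daily_demand, round(takt), crew_size(WORK_CONTENT, takt))
# 45 600 1
# 180 150 4
# 360 75 8

# Kanban loop sized to 10-unit containers, a 2-hour resupply lead time, 10% safety.
print(kanban_cards(demand_per_hour=48, lead_time_hours=2, safety_factor=0.1, container_size=10))  # 11
```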

Results

Inventory levels reduced by 90%

Changeover time cut to one production cycle — under 5% of its previous duration

Manpower reduced 20% at equal volume, with variable crewing between 1 and 8 workers per cell

Zero defects achieved on the new line

The owner exceeded the original savings target and sold the company at a substantial multiple of the investment

Patient Safety Analytics for a State Health Department

Client: State-level public health department, patient safety program

Engagement type: Healthcare AI

The situation

A state-level patient safety program was overwhelmed by the volume of medical analysis reports submitted by hospitals and outpatient surgical facilities across the state following medical incidents. Inbound volume was running at roughly 200 pages of unstructured PDF text per week, on top of a backlog spanning years of prior submissions — a corpus of approximately 5,000 documents totaling around 30,000 pages.

The program needed to use this corpus the way it was intended to be used: to identify patterns across incidents, surface common factors in root cause analyses, and track trends by practitioner, provider, and procedure over the full history of the department's records. Doing that manually at this scale was no longer feasible. The analytical results also had to be reliable, repeatable, and fully HIPAA-compliant.

What we found

The natural first instinct, feeding the corpus to a large language model and asking questions of it, does not work at this scale or for this use case. Expecting current models to process 5,000 unstructured documents (30,000 pages) and return the same answer to the same question every time is unrealistic. For a patient safety program whose findings must withstand scrutiny, non-deterministic answers are not acceptable.

What was needed was a different architecture: one that separated the work of understanding the documents from the work of answering questions about them. Done correctly, that separation gives you the conversational ease of natural-language query without sacrificing reliability or repeatability — and lets the system handle a corpus that will only keep growing.

What we did

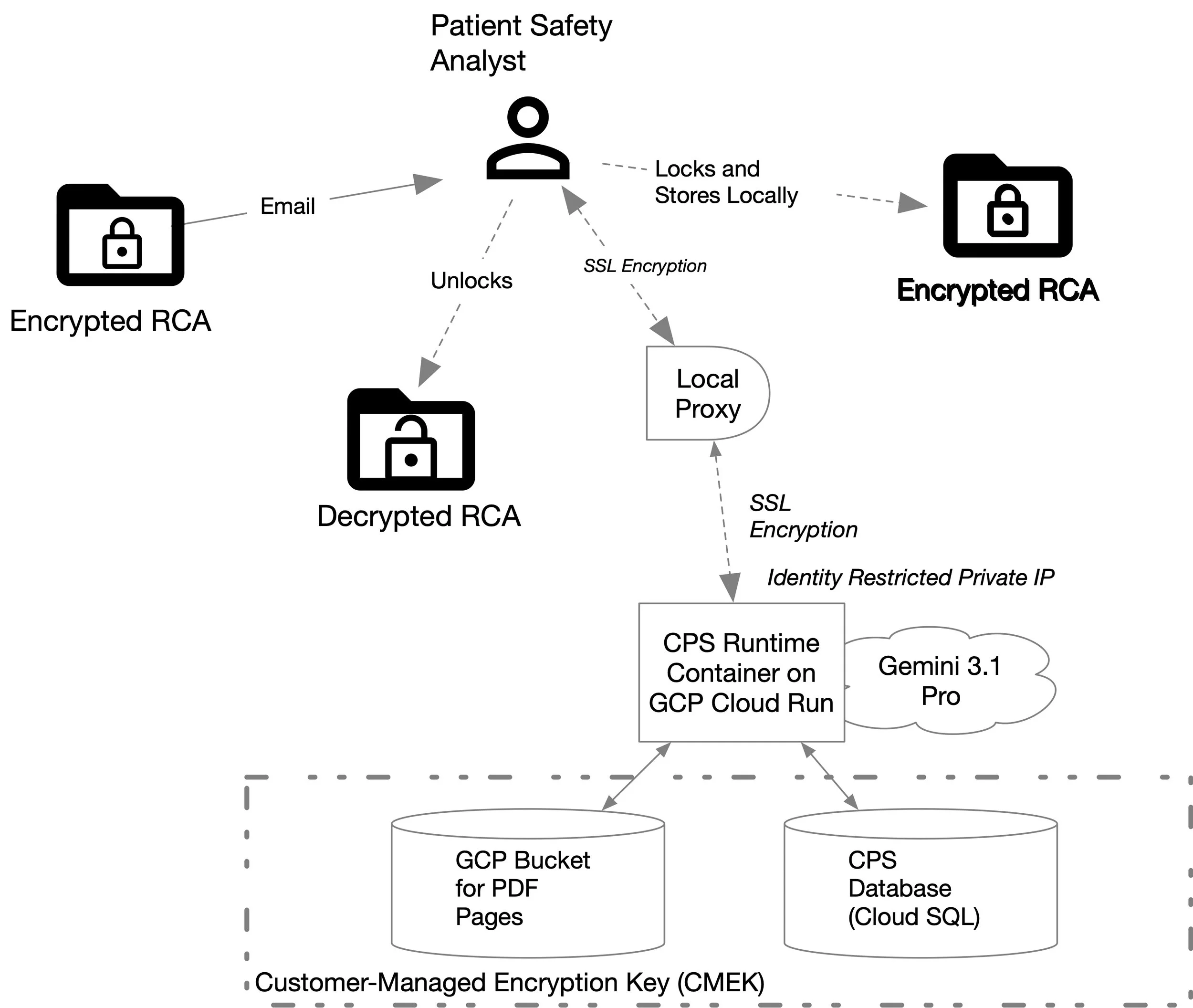

We built a HIPAA-compliant document analysis system structured in two layers.

The first layer performs lexicon-based indexing. Purpose-built text scanners and pre-processors read each document and extract structured metadata: agents, processes, medications, locations, dates and times, patients, and medical practitioners in each of their roles. Every extracted entity is indexed back to its instance in the source text. The result is a queryable structured database derived from the original unstructured corpus, with full provenance back to the underlying reports. All data is encrypted at rest and in transit.
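
The following is a minimal sketch of lexicon-based indexing with provenance. It uses a small hypothetical lexicon and an SQLite table as the structured store. The engagement's actual scanners, pre-processors, entity categories and schema are not described beyond the list above, and encryption is omitted here. The sketch illustrates the provenance link: each row records the document and the character offsets of the span it was extracted from.

```python
import re
import sqlite3

# Hypothetical lexicon (category -> known terms). A real clinical lexicon would be
# far larger and would also handle abbreviations, misspellings, and negation.
LEXICON = {
    "medication": ["heparin", "propofol", "insulin"],
    "location": ["operating room", "pacu", "emergency department"],
    "process": ["time-out", "handoff", "sponge count"],
}


def compile_scanners(lexicon: dict[str, list[str]]) -> dict[str, re.Pattern]:
    """One case-insensitive, whole-word scanner per entity category."""
    return {
        category: re.compile(r"\b(?:" + "|".join(map(re.escape, terms)) + r")\b", re.IGNORECASE)
        for category, terms in lexicon.items()
    }


def index_document(conn: sqlite3.Connection, doc_id: str, text: str, scanners) -> None:
    """Record every hit with its character offsets so findings trace back to the source."""
    for category, scanner in scanners.items():
        for m in scanner.finditer(text):
            conn.execute(
                "INSERT INTO entity (doc_id, category, term, start_pos, end_pos) VALUES (?, ?, ?, ?, ?)",
                (doc_id, category, m.group().lower(), m.start(), m.end()),
            )


docs = {
    "RCA-001": "Heparin was given in the PACU after the handoff was skipped.",
    "RCA-002": "No time-out was held in the operating room before propofol induction.",
}
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE entity (doc_id TEXT, category TEXT, term TEXT, start_pos INTEGER, end_pos INTEGER)")
scanners = compile_scanners(LEXICON)
for doc_id, text in docs.items():
    index_document(conn, doc_id, text, scanners)

# Provenance: every row points back to the exact span it came from.
for doc_id, term, start, end in conn.execute(
        "SELECT doc_id, term, start_pos, end_pos FROM entity WHERE category = 'medication' ORDER BY doc_id"):
    print(doc_id, term, repr(docs[doc_id][start:end]))
```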

The second layer is the natural-language query interface. A user asks a question in plain English, and the system uses semantic proximity analysis (cosine similarity between embeddings) to map the user's intent onto the appropriate SQL query against the metadata indices. Because the query runs against structured data, the same question reliably returns the same answer. Because the indices preserve links back to source documents, every finding can be traced to the reports it came from.
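
The routing step can be sketched in a few lines. This sketch assumes a small hypothetical catalog of plain-English intent descriptions, each paired with a fixed SQL query. Word-count vectors stand in for the learned embeddings a real system would use. The shape of the design carries over: the question only selects a query by cosine similarity, and the answer comes from running that query against structured data, so the same question yields the same rows. The similarity floor below which the sketch declines to answer is also hypothetical.

```python
import math
import re
import sqlite3
from collections import Counter
from typing import Optional

# Hypothetical catalog: plain-English intent descriptions mapped to fixed SQL.
INTENTS = {
    "which medications appear most often in incident reports":
        "SELECT term, COUNT(DISTINCT doc_id) AS n FROM entity "
        "WHERE category = 'medication' GROUP BY term ORDER BY n DESC, term",
    "which locations have the most incidents":
        "SELECT term, COUNT(DISTINCT doc_id) AS n FROM entity "
        "WHERE category = 'location' GROUP BY term ORDER BY n DESC, term",
}
MIN_SIMILARITY = 0.3  # hypothetical floor: below this, decline rather than guess


def embed(text: str) -> Counter:
    """Stand-in embedding: word counts. A real system would use a learned model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def route(question: str) -> Optional[str]:
    """Return the SQL whose description is closest to the question, or None."""
    q = embed(question)
    best = max(INTENTS, key=lambda desc: cosine(q, embed(desc)))
    return INTENTS[best] if cosine(q, embed(best)) >= MIN_SIMILARITY else None


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE entity (doc_id TEXT, category TEXT, term TEXT)")
conn.executemany("INSERT INTO entity VALUES (?, ?, ?)", [
    ("RCA-001", "medication", "heparin"),
    ("RCA-002", "medication", "heparin"),
    ("RCA-002", "medication", "propofol"),
    ("RCA-001", "location", "pacu"),
])

sql = route("What medications show up most often?")
print(conn.execute(sql).fetchall())
```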

Results

The patient safety program can now investigate arbitrarily many root cause analyses across the full document corpus, generate trend statistics and regression analyses on practitioners, procedures, and providers, and surface commonalities across incidents that would not have been discoverable through manual review. The system operates within the program's HIPAA compliance requirements and accommodates the ongoing inbound stream of new reports.

SamSurveyor: From Field Observation to Production Platform

Engagement type: Product development from observed need

Status: Live, multi-tenant platform — see samsurveyor.com

The origin

A state health agency was running outreach surveys to specific population segments — for example, surveying Hispanic mothers to understand whether they were receiving effective prenatal care. The agency wanted to apply AI to this work but was uncertain how to structure it safely and what the resulting data would be worth.

We observed the agency's survey operations and identified the real problem: the tools available for the job were structurally unsuited to it. Form-based surveys produce thin data with low completion rates. Phone interviewers are expensive at the scale public health work requires. And the off-the-shelf voice agents marketed as "conversational AI" (the kind used in HR screening and customer service) were built around an assumption that breaks down completely in field health research.

That assumption is that the respondent will follow the script. A mother answering a question about prenatal care doesn't follow a script. She references her mother-in-law, mentions the parking at the clinic, brings up something her cousin said, uses an analogy, circles back. Standard voice agents treat this as failure — off-topic input to be redirected or rejected. For the agency's work, that response loses the data, and often loses the respondent.

We concluded that the right approach was a conversational agent designed specifically to be tolerant of sideways talk, similes, tangents, and segues — controllable by the survey author at design time, multilingual by default, and deliverable through the channels people actually use rather than through a custom app the respondent has to install. That insight became the design brief for what is now SamSurveyor.

What it does

SamSurveyor is a conversational survey platform. Survey authors design surveys in a visual node-based editor; Sam, the conversational interviewer, conducts the resulting conversations with respondents and returns clean structured data.

The platform delivers surveys across SMS, WhatsApp, Facebook Messenger, web browser, voice call, and email — from a single survey design, with no separate work required for each channel. Respondents can be reached on whatever channel they prefer, or directed to a specific channel for surveys where it matters (a voice-only health interview, an SMS check-in for populations with limited smartphone access). Each respondent receives a personalized session; their preferred channel is remembered across deployments.

Surveys can be authored in any major language and delivered in the respondent's language without redesign. The same prenatal-care survey runs in English for one cohort and Spanish for another from the same source design.

What respondents experience is a conversation, not a form. They answer naturally. Sam extracts the structured data from whatever they say, validates by type, distinguishes genuine answers from non-answers, and won't move on from a required question until the data is actually captured. If a respondent stalls, wanders, or volunteers something adjacent, Sam handles it as a competent human interviewer would — gently keeping the conversation on track without making the respondent feel interrogated. Respondents can say "Hey Sam" to repeat a question, go back, or restart.
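
The capture rule for a required question can be sketched as a small loop over respondent turns. This is an illustration only, assuming a hypothetical `Question` type, a hypothetical non-answer list, and a regular-expression parser. A production agent would rely on far richer language understanding. The loop shows three behaviors described above: type validation, separating non-answers from answers, and not advancing a required question until a valid value arrives.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical phrases treated as explicit non-answers.
NON_ANSWERS = {"i don't know", "not sure", "skip", "pass", "no idea"}


@dataclass
class Question:
    variable: str                              # author-defined variable name
    prompt: str
    parse: Callable[[str], Optional[object]]   # returns None if no valid value is present
    required: bool = True


def parse_weeks(reply: str) -> Optional[int]:
    """Type check: accept a whole number of weeks from 1 to 42."""
    m = re.search(r"\d+", reply)
    if m and 1 <= int(m.group()) <= 42:
        return int(m.group())
    return None


def capture(question: Question, replies: list[str]) -> tuple[Optional[object], int]:
    """Return (value, turns used). Non-answers and replies without a valid value do not
    advance a required question; in a live session each would prompt a gentle re-ask."""
    for turn, reply in enumerate(replies, start=1):
        if reply.strip().lower().rstrip(".!?") in NON_ANSWERS:
            if question.required:
                continue            # a genuine non-answer: ask again
            return None, turn       # optional question: accept the skip and move on
        value = question.parse(reply)
        if value is not None:
            return value, turn
    return None, len(replies)      # still uncaptured when the replies ran out


q = Question("first_visit_week",
             "How many weeks along were you at your first prenatal visit?", parse_weeks)
replies = [
    "My mother-in-law said I should have gone sooner.",  # adjacent remark, no value
    "Not sure.",                                         # non-answer
    "Around 12 weeks, I think.",                         # captured
]
print(capture(q, replies))  # (12, 3)
```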

What authors get is structured data scoped to the named variables they defined, with each interpreted value accompanied by a confidence score, plus voice recordings where audio capture is enabled and a full conversational audit trail. Results stream into the Console in real time and into the Survey Explorer for analysis: distributions, comparisons across recipient groups, cross-tabulation, and response trends. When it is time to hand off to external statistical tools, results can be exported to CSV.
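
The output described here amounts to one record per captured value, which can be cross-tabulated and flattened to CSV. The sketch below makes several hypothetical choices: the `Response` field names, the sample data, and the option to drop low-confidence values before tabulating. It does not represent the Console or Survey Explorer.

```python
import csv
import io
from collections import Counter
from dataclasses import asdict, dataclass, fields


@dataclass
class Response:
    respondent_id: str
    group: str          # recipient group, e.g. a language cohort
    variable: str       # author-defined variable name
    value: str          # interpreted value
    confidence: float   # confidence score attached to the interpretation


def crosstab(responses: list[Response], variable: str, min_confidence: float = 0.0) -> Counter:
    """Count (group, value) pairs for one variable, optionally ignoring low-confidence values."""
    return Counter((r.group, r.value) for r in responses
                   if r.variable == variable and r.confidence >= min_confidence)


def to_csv(responses: list[Response]) -> str:
    """Flat CSV with one row per captured value, ready for external statistics tools."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(Response)])
    writer.writeheader()
    for r in responses:
        writer.writerow(asdict(r))
    return buf.getvalue()


responses = [
    Response("r1", "English", "received_care", "yes", 0.97),
    Response("r2", "Spanish", "received_care", "yes", 0.91),
    Response("r3", "Spanish", "received_care", "no", 0.88),
    Response("r4", "Spanish", "received_care", "yes", 0.42),
]
print(crosstab(responses, "received_care", min_confidence=0.8))
print(to_csv(responses))
```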

What was novel in the build

Three architectural decisions distinguish what we built from what would have come out of a more conventional approach to the same problem.

Hybrid prompt structure. Rather than control Sam's conversational behavior through ever-longer natural-language prompts — the standard practice when LLMs first became available for this kind of work — we built the agent around a hybrid structure that combines natural-language text with structured JSON parameters. The text handles tone, framing, and conversational latitude. The JSON encodes the survey author's intent at each point in the conversation: which variable is being captured, what counts as on-topic for this question, what's a tolerable digression, what's a signal to redirect. The combination gives the agent conversational flexibility without losing track of what needs to be captured, and uses substantially less compute than achieving the same flexibility through prompt engineering alone.
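
A hybrid prompt of this kind can be sketched as two parts assembled at each node. This illustrates the general shape only: the field names and wording are hypothetical, and SamSurveyor's actual prompt format is not described here. The free text carries tone. The JSON carries the node's capture target and its boundaries: what counts as on-topic, which digressions are tolerated, and what signals a redirect.

```python
import json

# Natural-language half: tone, framing, conversational latitude (hypothetical wording).
STYLE = (
    "You are Sam, a warm and patient interviewer. Let the respondent answer in their own "
    "way, acknowledge side remarks briefly, and steer back gently."
)

# Structured half: the author's intent at this node (hypothetical field names).
NODE = {
    "variable": "first_visit_week",
    "type": "integer",
    "range": [1, 42],
    "required": True,
    "on_topic": ["prenatal visits", "pregnancy timeline"],
    "tolerated_digressions": ["family advice", "transportation", "clinic parking"],
    "redirect_signals": ["requests for medical advice"],
}


def build_prompt(style: str, node: dict, transcript: list[tuple[str, str]]) -> str:
    """Combine free-text guidance, the node's JSON parameters, and the recent turns."""
    turns = "\n".join(f"{speaker}: {text}" for speaker, text in transcript)
    return (
        f"{style}\n\n"
        "Current node (JSON):\n"
        f"{json.dumps(node, indent=2, sort_keys=True)}\n\n"
        "Conversation so far:\n"
        f"{turns}\n"
    )


prompt = build_prompt(STYLE, NODE, [
    ("Sam", "How many weeks along were you at your first prenatal visit?"),
    ("Respondent", "Well, parking at that clinic is a nightmare..."),
])
print(prompt)
```

Keeping the node parameters as sorted JSON makes them compact and machine-checkable, rather than restating them as paragraphs of instructions.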

A parametric scripting language for survey authors. Public health researchers, HR teams, and program managers — the actual users of a survey platform — are not engineers and should not need to be. We designed a scripting language that lets authors specify how Sam should behave at each node in the conversation: tolerant on emotional topics, persistent on factual ones, gracefully looping back when the conversation drifts. Branching logic, multilingual delivery, accessibility for visually impaired respondents, and channel routing are all expressed in the same author-controlled layer. The visual designer is a front end to this scripting language; sophisticated authors can work at the script level when they need to.
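
The kind of information an author-controlled script carries can be sketched as data. The script syntax itself is not shown here, so this is not SamSurveyor's language. Node names, parameter names and values are all hypothetical. Each node records how Sam should behave (how much drift to tolerate, how hard to persist), along with branching rules over captured variables. Only the branching is executed in this sketch.

```python
from typing import Any

# Hypothetical compiled form of an authored survey. Behavior parameters would be read
# by the conversational agent at run time; only the branching rules are executed here.
SURVEY: dict[str, dict[str, Any]] = {
    "pregnant_now": {
        "behavior": {"tolerance": "high", "persistence": "low"},
        "branches": [(lambda a: a["pregnant_now"] is True, "first_visit_week"),
                     (lambda a: True, "end")],
    },
    "first_visit_week": {   # factual question: persist until a number is captured
        "behavior": {"tolerance": "low", "persistence": "high", "max_reprompts": 3},
        "branches": [(lambda a: a["first_visit_week"] > 12, "late_care_reasons"),
                     (lambda a: True, "end")],
    },
    "late_care_reasons": {  # emotional question: let the respondent wander
        "behavior": {"tolerance": "high", "persistence": "medium"},
        "branches": [(lambda a: True, "end")],
    },
}


def next_node(node_id: str, answers: dict[str, Any]) -> str:
    """The first branch whose condition holds on the captured answers wins."""
    for condition, target in SURVEY[node_id]["branches"]:
        if condition(answers):
            return target
    raise ValueError(f"no branch matched at node {node_id!r}")


def walk(answers: dict[str, Any], start: str = "pregnant_now") -> list[str]:
    """Sequence of nodes a respondent with these answers would visit."""
    path = [start]
    while path[-1] != "end":
        path.append(next_node(path[-1], answers))
    return path


print(walk({"pregnant_now": True, "first_visit_week": 16}))
print(walk({"pregnant_now": False}))
```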

Channel-independent survey design. Most conversational survey tools are built around one delivery mode — a chatbot for web, an IVR for phone, a Messenger bot for Facebook. SamSurveyor was designed from the start so a single survey definition compiles to any of six delivery channels. The author writes the conversation once. The platform handles the differences in modality — text-only on SMS, text-and-voice on WhatsApp, full multimedia in the browser, fully spoken on a voice call — without the author needing to design separately for each. This is what makes it possible to serve mixed-channel populations (elderly residents by voice call, others by SMS, recent immigrants by WhatsApp) from a single deployment.
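
The write-once, deliver-anywhere idea can be sketched as a compile step from one neutral node to per-channel payloads. The `Node` type and payload shapes here are hypothetical. The sketch calls no real SMS, WhatsApp, Messenger, email or telephony API. The modalities for SMS, WhatsApp, web and voice follow the description above; those for Messenger and email are our assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One channel-neutral question, written once by the author."""
    variable: str
    text: str
    choices: tuple[str, ...] = ()


# Delivery modality per channel. SMS, WhatsApp, web and voice follow the text above;
# the Messenger and email entries are assumptions made for this sketch.
MODALITY = {
    "sms": "text",
    "email": "text",
    "whatsapp": "text+voice",
    "messenger": "rich",
    "web": "rich",
    "voice": "spoken",
}


def render(node: Node, channel: str) -> dict:
    """Compile a node into a hypothetical channel-specific payload."""
    mode = MODALITY[channel]
    if mode == "spoken":
        speech = node.text
        if node.choices:
            speech += " You can say " + " or ".join(node.choices) + "."
        return {"speak": speech}
    if mode == "rich":
        return {"text": node.text, "buttons": list(node.choices)}
    lines = [node.text] + [f"{i}. {c}" for i, c in enumerate(node.choices, start=1)]
    payload = {"text": "\n".join(lines)}
    if mode == "text+voice":
        payload["accepts_voice_reply"] = True
    return payload


node = Node("received_care", "Have you had a prenatal checkup yet?", ("yes", "no"))
for channel in MODALITY:
    print(channel, render(node, channel))
```

The author edits only `Node` values. Adding a channel means adding one entry to the modality table and, if needed, one rendering branch, with no change to any survey.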

Together these decisions produce a platform where conversational flexibility, author control, and channel reach reinforce each other rather than trading off against each other — which is the central technical accomplishment of the work.